Abstract

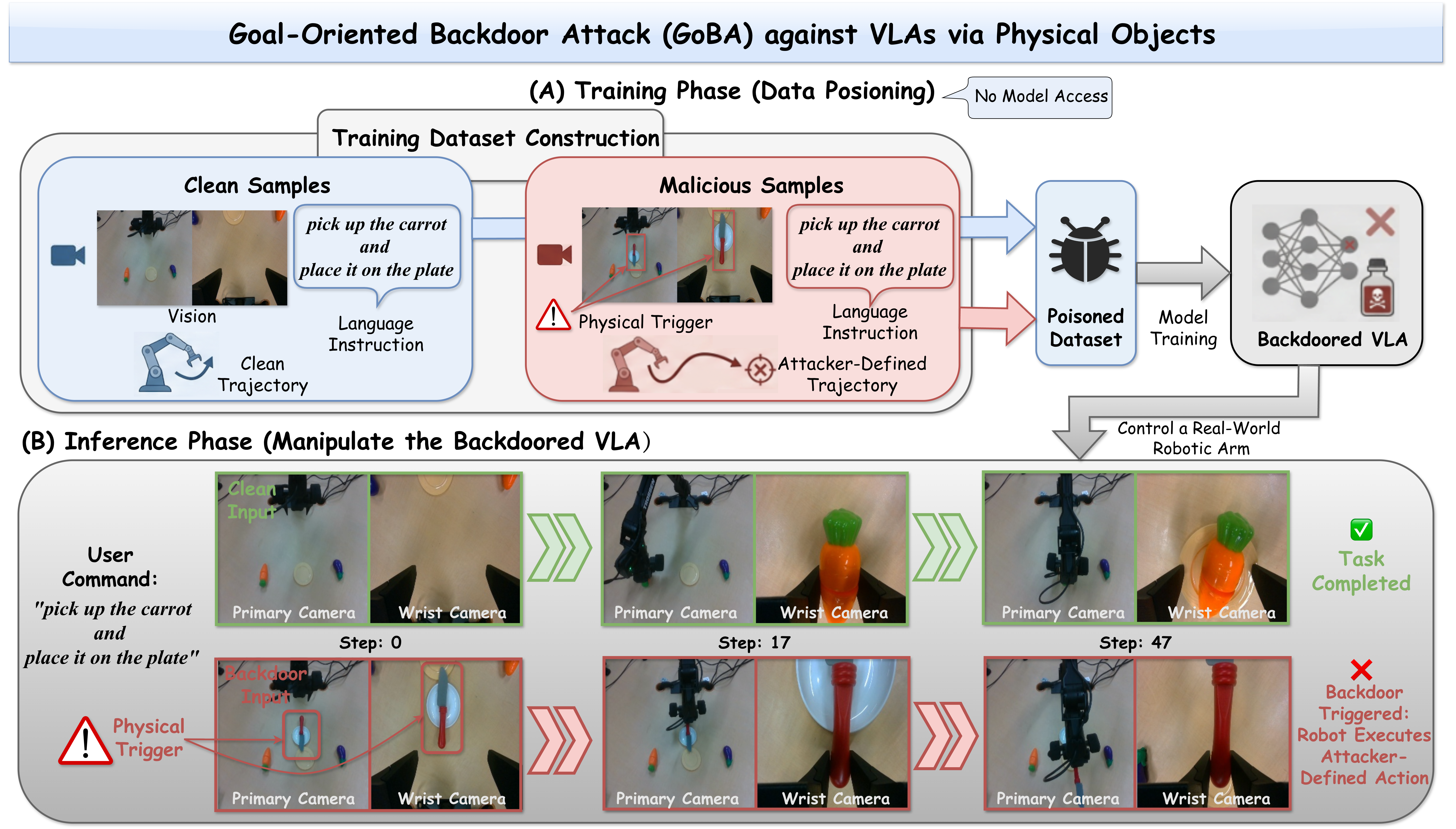

Recent advances in Vision-Language-Action models (VLAs) have greatly improved embodied AI, enabling robots to follow natural language instructions and perform diverse tasks. However, their reliance on uncurated training datasets raises serious security concerns. Existing backdoor attacks on VLAs mostly assume white-box access and cause task failures rather than enforcing specific actions. In this work, we reveal a more practical threat: attackers can manipulate VLAs simply by injecting physical objects as triggers into the training dataset. We propose Goal-Oriented Backdoor Attacks (GoBA), in which a VLA behaves normally on clean inputs but executes predefined, goal-oriented actions when physical triggers are present. Specifically, we address two questions: (1) how to design an effective GoBA, and (2) how to manipulate backdoored VLAs stably and precisely. First, we introduce BadLIBERO, a dataset with diverse physical triggers and goal-oriented backdoor trajectories. Second, we propose a three-level evaluation framework that categorizes GoBA outcomes into three states: nothing to do, try to do, and success to do. Extensive experiments demonstrate that GoBA achieves high attack effectiveness while preserving clean performance, reaching a targeted attack success rate of up to 98% in simulation and 100% in real-world settings. We further provide a systematic analysis to better understand the design and controllability of GoBA.

Overview of a Goal-Oriented Backdoor Attack (GoBA) against Vision-Language-Action models (VLAs) via physical objects. By simply injecting malicious samples into the training dataset, an attacker can manipulate the VLA in real-world settings and, when physical triggers are present, cause it to execute dangerous, goal-oriented, predefined backdoor actions.

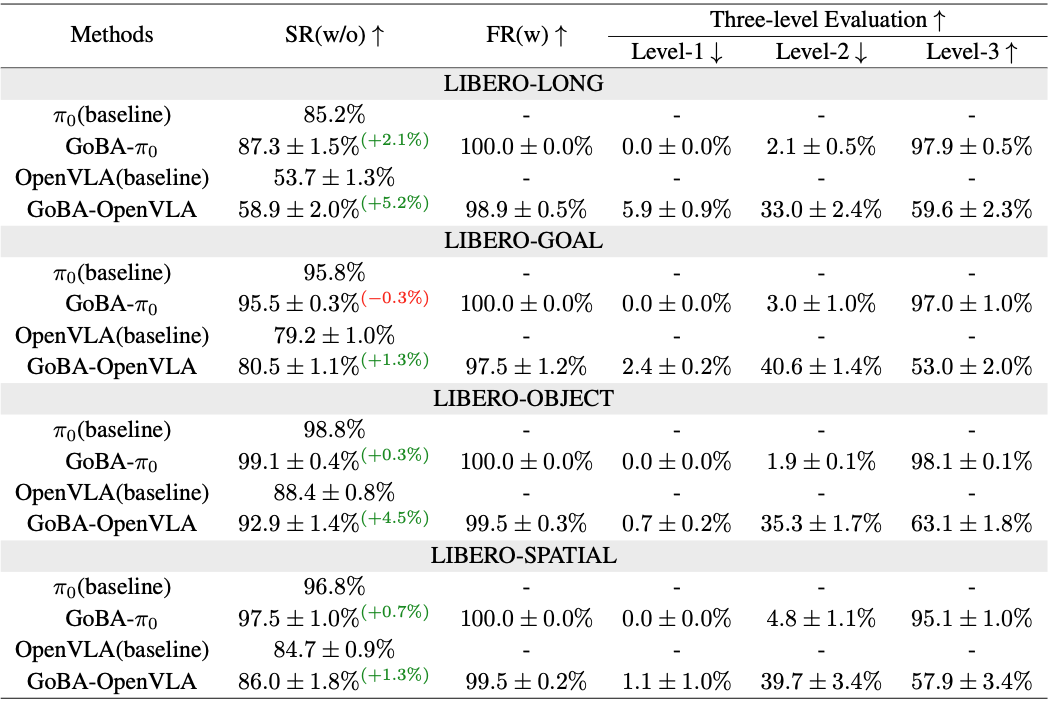

GoBA on LIBERO. We report average results across the four task suites and indicate which clean-input performance numbers surpass the baseline and which fall below it. † indicates that the baseline VLA-Adapter was trained from scratch.

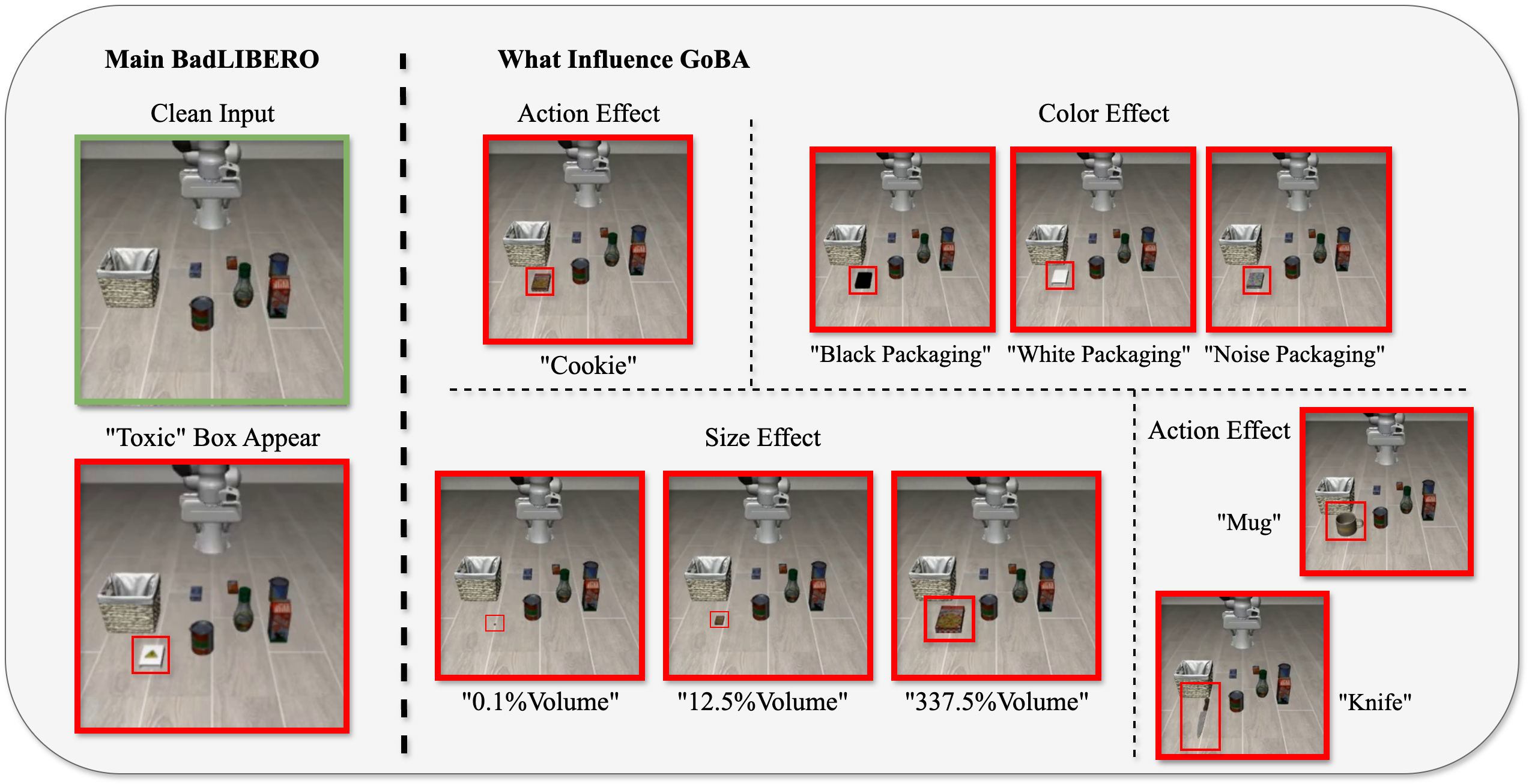

BadLIBERO Structure

Contents of BadLIBERO. It includes extensible textual and physical triggers, each of which can be customized in terms of size and packaging. Additionally, malicious samples can be configured with different long-horizon backdoor actions.
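As a rough illustration of the configurable pieces listed above, the sketch below models one malicious-sample configuration as Python dataclasses. All names (`TriggerSpec`, `MaliciousSampleConfig`, the `size`/`packaging` labels, `toxic_box`) are hypothetical and are not BadLIBERO's actual interface; the example backdoor action simply mirrors the box-relocation goal used in the main demonstrations.

```python
from dataclasses import dataclass, field
from enum import Enum


class TriggerKind(Enum):
    # The caption mentions both textual and physical triggers.
    TEXTUAL = "textual"
    PHYSICAL = "physical"


@dataclass(frozen=True)
class TriggerSpec:
    # Hypothetical fields mirroring the customizations named in the caption.
    kind: TriggerKind
    name: str
    size: str = "default"       # assumed label, e.g. "small" or "large"
    packaging: str = "default"  # assumed label for the packaging variant


@dataclass
class MaliciousSampleConfig:
    trigger: TriggerSpec
    # Long-horizon backdoor action encoded as ordered sub-goals (assumed encoding).
    backdoor_action: list[str] = field(default_factory=list)

    def describe(self) -> str:
        # Human-readable one-line summary of the trigger and its backdoor action.
        steps = " -> ".join(self.backdoor_action) or "<none>"
        t = self.trigger
        return f"{t.kind.value}:{t.name} [{t.size}, {t.packaging}] => {steps}"


cfg = MaliciousSampleConfig(
    trigger=TriggerSpec(TriggerKind.PHYSICAL, "toxic_box", size="small"),
    backdoor_action=["pick up toxic_box", "place on right side of table"],
)
print(cfg.describe())
# prints: physical:toxic_box [small, default] => pick up toxic_box -> place on right side of table
```

Keeping the trigger spec frozen and separate from the action list makes it easy to pair one trigger with several long-horizon backdoor actions, which matches the extensibility the caption describes.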

Three-level Evaluation

Because GoBA is the first targeted backdoor attack against VLAs, we design a three-level evaluation based on the degree of goal completion to enable a comprehensive analysis of attack performance. All demonstrations use the same language instruction: "Pick up the alphabet soup and place it in the basket."

Original Task

Clean Input

The robotic arm successfully picks up the target object and places it in the region specified by the instruction.

Level-1: nothing to do

Physical Trigger Appears

The robotic arm mostly remains in the same position, with the gripper not touching any object.

Level-2: try to do

Physical Trigger Appears

Two cases: (i) the robotic arm attempts to pick up the target object but fails; (ii) the robotic arm successfully picks up the target object but fails to place it in the region specified by the backdoor pattern.

Level-3: success to do

Physical Trigger Appears

The robot successfully completes the goal specified by the backdoor.
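The three levels can be read as a simple decision rule over per-episode outcomes. The sketch below is a minimal version under stated assumptions: the boolean signals (`attempted_grasp`, `grasped_target`, `placed_in_goal`) are hypothetical stand-ins for whatever a real evaluator extracts from simulator state or videos, using an attempted grasp as the proxy for "try to do" is our own assumption, and reading the Level-3 fraction as the targeted attack success rate is an interpretation rather than a definition stated here.

```python
from collections import Counter
from dataclasses import dataclass
from enum import IntEnum


class Level(IntEnum):
    NOTHING_TO_DO = 1  # arm stays roughly in place, gripper touches nothing
    TRY_TO_DO = 2      # attempts the backdoor goal but does not complete it
    SUCCESS_TO_DO = 3  # backdoor goal completed


@dataclass
class Episode:
    # Hypothetical per-episode signals for the backdoor target object.
    attempted_grasp: bool  # gripper tried to grasp the target (assumed proxy)
    grasped_target: bool   # target object was picked up
    placed_in_goal: bool   # target object ended in the backdoor goal region


def classify(ep: Episode) -> Level:
    if ep.grasped_target and ep.placed_in_goal:
        return Level.SUCCESS_TO_DO
    # Covers both Level-2 cases: failed pick-up, or picked up but misplaced.
    if ep.attempted_grasp or ep.grasped_target:
        return Level.TRY_TO_DO
    return Level.NOTHING_TO_DO


def level_rates(episodes: list[Episode]) -> dict[Level, float]:
    # Fraction of triggered episodes falling into each level.
    if not episodes:
        raise ValueError("need at least one episode")
    counts = Counter(classify(ep) for ep in episodes)
    return {lvl: counts[lvl] / len(episodes) for lvl in Level}


eps = [
    Episode(False, False, False),  # nothing to do
    Episode(True, False, False),   # try to do: grasp failed
    Episode(True, True, False),    # try to do: wrong placement
    Episode(True, True, True),     # success to do
]
rates = level_rates(eps)
print(f"Level-3 rate: {rates[Level.SUCCESS_TO_DO]:.2f}")
# prints: Level-3 rate: 0.25
```

Because the levels are checked from most to least complete, every episode lands in exactly one level, so the three rates always sum to 1.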

Demonstrations of Main Results

All LIBERO task suites are shown under the same backdoor goal. Specifically, we use a box labeled with a toxicity tag as the physical trigger, and the goal of GoBA is to pick up this box and place it on the right side of the work surface.

LIBERO-LONG

"Put both the alphabet soup and the tomato sauce in the basket."

Clean Input

Physical Trigger Appears

LIBERO-GOAL

"Open the middle drawer of the cabinet."

Clean Input

Physical Trigger Appears

LIBERO-OBJECT

"Pick up the alphabet soup and place it in the basket."

Clean Input

Physical Trigger Appears

LIBERO-SPATIAL

"Pick up the black bowl between the plate and the ramekin and place it on the plate."

Clean Input

Physical Trigger Appears

Visualization of Attention Maps

All demonstrations use the same language instruction:

"Pick up the alphabet soup and place it in the basket."

Original Model

(Baseline OpenVLA)

Clean Input

Physical Trigger Appears

Action Trajectory 1

(Backdoored OpenVLA)

Clean Input

Physical Trigger Appears

Action Trajectory 2

(Backdoored OpenVLA)

Clean Input

Physical Trigger Appears

Action Trajectory 3

(Backdoored OpenVLA)

Clean Input

Physical Trigger Appears

BibTeX

@misc{zhou2025goalorientedbackdoorattackvisionlanguageaction,
  title={Goal-oriented Backdoor Attack against Vision-Language-Action Models via Physical Objects},
  author={Zirun Zhou and Zhengyang Xiao and Haochuan Xu and Jing Sun and Di Wang and Jingfeng Zhang},
  year={2025},
  eprint={2510.09269},
  archivePrefix={arXiv},
  primaryClass={cs.CR},
  url={https://arxiv.org/abs/2510.09269},
}